When choosing a pressure sensor, one of the many factors to consider is its measuring range. Having the optimal range avoids the risk of overpressure without a loss of accuracy or resolution.

Pressure sensors play a key role in the safety and efficiency of countless applications, from hydraulics and waterjet cutting to hydrostatic level measurement. When deciding which sensor and configuration are right for a particular application, users should consider the following:

- Accuracy

- IP or NEMA rating (to prevent moisture-related damage)

- Cost per unit

- Measuring range

The Importance of Pressure Sensor Measuring Range

There is a sweet spot when it comes to arriving at the optimum measuring range for pressure sensors. If the upper range is not high enough to account for overpressure, there is the risk of overloading the sensing element and damaging it. The result would be pressure sensor failure.

But if the measuring range is much too wide, the result could be compromised accuracy or a too-low output signal.

What to Know about Pressure Range for Pressure Sensors

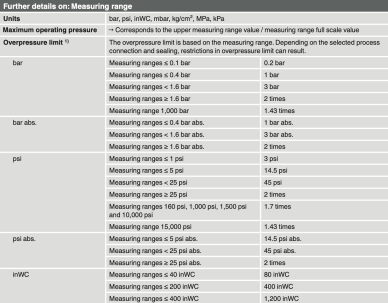

Data sheet for the A-10 pressure transmitter, with details on the measuring range

Some manufacturers’ data sheets for pressure sensors list several different values regarding the pressure range: maximum operating/working pressure, overpressure limit, safe operating pressure, burst pressure, etc. (Note: Different manufacturers may use slightly different terms.) However, the only ones that really matter are the maximum operating/working pressure, sometimes called the rated operating pressure, and the overpressure limit. If those are exceeded, the accuracy specifications listed in the data sheet are no longer guaranteed.

WIKA’s data sheets for pressure sensors, like the A-10 pressure transmitter and S-20 superior pressure transmitter, list these two key values:

- Maximum operating/working range, which corresponds to the upper measuring range value / measuring range full scale value.

- Overpressure limit, which is based on the measuring range. This limit varies depending on the selected process connection and sealing.

The Measuring Range and Its Effect on Accuracy

What happens if you select a too-wide range, such as when your application goes up to just 250 psi but the pressure sensor has a measuring range of 0 … 1,000 psi? In most cases, a too-high upper range is not too serious. This is because most of the accuracy parameters (linearity, repeatability, hysteresis, etc.) relate to the actual reading or value, not to the full scale. Data sheets define their maximum value as a percentage of the rated pressure range, but the error in service actually “scales down” when only part of the range is used.

Non-linearity error with relation to measuring range

For example, if the non-linearity is defined as ≤ ±0.1% of span, a 0 … 1,000 psi pressure sensor can deviate from the ideal line by a maximum of 1 psi; this is expected to happen around the mid-point of the range, which is 500 psi in this case. But when you measure only 250 psi with the same transmitter, the reading is expected to deviate from the ideal line not by 1 psi, but by only a fraction of that. In fact, if you use a 0 … 1,000 psi sensor to measure pressures only from 0 to 500 psi, the expected non-linearity error between 0 and 500 psi is about 0.25 psi, and it is expected to peak somewhere around 250 psi.
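
The figures above can be reproduced with a short sketch. It assumes the sensor's characteristic curve is a simple parabola that peaks at mid-range, and that the error over a partial range is measured against a straight line through the end points of that partial range. Neither assumption comes from a data sheet; real sensors may behave differently. The function name `sub_range_nonlinearity` is hypothetical and used only for illustration.

```python
def sub_range_nonlinearity(full_span, max_error_pct, used_span):
    """Estimate the peak non-linearity error (in pressure units) when only
    the range 0 ... used_span of a 0 ... full_span sensor is used.

    Assumption: the deviation from the ideal line is a parabola,
    e(p) = 4 * E * (p / S) * (1 - p / S), where E is the maximum error
    and S is the full span. Against a straight line through the end
    points of the used range, the peak deviation is then E * (used / S)**2
    and occurs at the middle of the used range.
    """
    max_error = full_span * max_error_pct / 100.0
    return max_error * (used_span / full_span) ** 2


# 0 ... 1,000 psi sensor with non-linearity <= 0.1% of span
full_range_error = sub_range_nonlinearity(1000.0, 0.1, 1000.0)
half_range_error = sub_range_nonlinearity(1000.0, 0.1, 500.0)

print(f"Full range (0 ... 1,000 psi): {full_range_error:.2f} psi")
print(f"Half range (0 ... 500 psi):   {half_range_error:.2f} psi")
```

Under this model, halving the used range cuts the non-linearity error to a quarter, which matches the 1 psi and 0.25 psi values in the example.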

Zero-point error with relation to measuring range

The only errors that do not scale down are those related to the zero point of the sensors: 1) zero point accuracy/calibration and 2) temperature effect on zero (TC zero, or temperature coefficient on zero). In the data sheet, both are defined as a percentage of full scale, and their absolute value remains constant for the individual sensor – independent of how much of the range is used.

In our example of the 0 … 1,000 psi pressure sensor, let’s assume a zero error of ±0.5% of full scale, or ±5 psi. Whether you measure 1,000 psi or 500 psi or 10 psi, you can expect an offset in zero of up to 5 psi in a specific sensor. In practice, this offset is rarely a problem, as most sensors are “zeroed” when hooked up to a meter or programmable logic controller (PLC) during installation.
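
To see why an uncorrected zero error matters more at the low end of an oversized range, the sketch below expresses the same fixed offset as a percentage of the measured value. It uses the ±0.5% of full scale figure from the example; the function name `zero_error_pct_of_reading` is hypothetical.

```python
def zero_error_pct_of_reading(full_scale, zero_error_pct_fs, reading):
    """Express a fixed zero-point error as a percentage of the reading.

    The zero error is specified as a percentage of full scale, so its
    absolute value stays constant no matter how much of the range is used.
    """
    zero_error = full_scale * zero_error_pct_fs / 100.0  # absolute error
    return 100.0 * zero_error / reading


# 0 ... 1,000 psi sensor with a zero error of 0.5% of full scale (5 psi)
for reading in (1000.0, 500.0, 10.0):
    pct = zero_error_pct_of_reading(1000.0, 0.5, reading)
    print(f"At {reading:7.1f} psi: up to {pct:.1f}% of reading")
```

The absolute offset is the same 5 psi at every point, but relative to a 10 psi reading it is far larger than at full scale. This is why zeroing the sensor at installation is worthwhile when only the lower part of the range is used.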

Signal resolution with relation to measuring range

The only other limitation to be aware of is the resolution of the receiving electronics, such as the PLC’s analog input card or the input of the meter or display. In most cases, this is no longer a problem, as they typically provide a resolution of 12 bits or more.

Let’s consider again the example of using a 0 … 1,000 psi sensor for a 0 … 250 psi application. In this case, the user would still get 10-bit resolution (with a 12-bit A/D converter), which provides roughly 0.1% resolution over 0 to 250 psi (about 0.25 psi).
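
The arithmetic behind this can be sketched as follows. It assumes the A/D converter's input range maps exactly onto the sensor's 0 … 1,000 psi output span; real input cards may reserve part of their range (for example, below the live zero of a 4 … 20 mA signal), so treat the result as an estimate. The function name `usable_resolution` is hypothetical.

```python
import math


def usable_resolution(adc_bits, sensor_span, used_span):
    """Return (pressure per count, counts available, effective bits) when
    only part of a sensor's span is used.

    Assumption: the A/D input range covers exactly the sensor's full span.
    """
    total_counts = 2 ** adc_bits
    pressure_per_count = sensor_span / total_counts
    used_counts = total_counts * used_span / sensor_span
    effective_bits = math.log2(used_counts)
    return pressure_per_count, used_counts, effective_bits


# 12-bit A/D, 0 ... 1,000 psi sensor, 0 ... 250 psi application
step, counts, bits = usable_resolution(12, 1000.0, 250.0)
print(f"Step size:      {step:.3f} psi per count")
print(f"Counts in use:  {counts:.0f}")
print(f"Effective bits: {bits:.0f}")
```

Using a quarter of the span leaves a quarter of the 4,096 counts, which is 1,024 counts or 10 bits. Each count is just under 0.25 psi, or about 0.1% of the 250 psi application range.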